Every other AI pentester asks you to trust their prompt engineering with your production database, your secrets, and your customer data. We don't ask for trust. We ship a machine-checked proof with every action the agent takes. If it's out of scope, the code literally cannot run.

Why you need an AI hacker

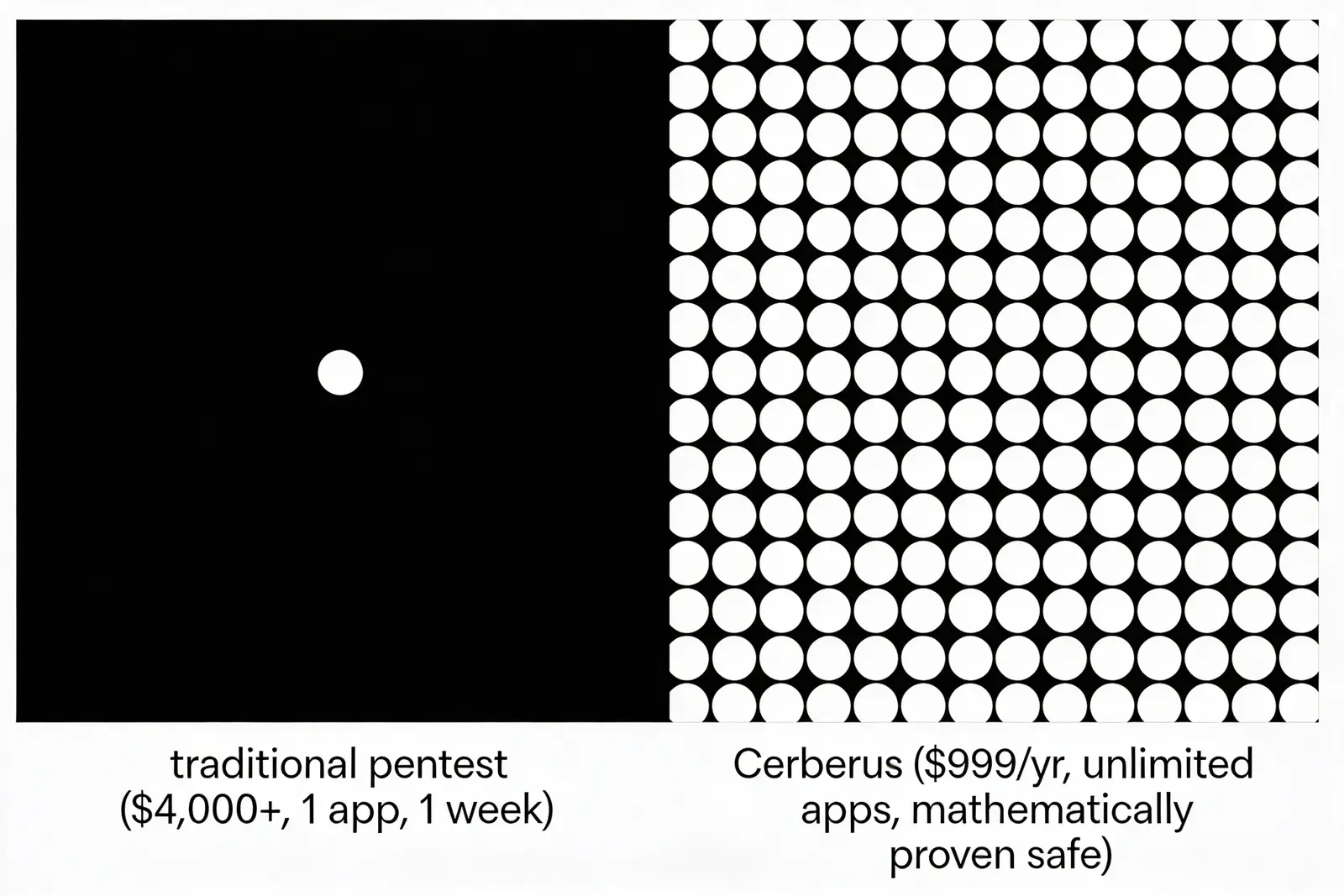

Every week another company gets breached. The standard answer — a manual pentest from a consultancy — costs $80k–$200k, takes six weeks, covers 5% of your surface area, and happens twice a year. Between those two snapshots you are flying blind.

1% of the cost of a human pentest.

An AI hacker changes the math. Continuous testing, every day, across everything you own. That's not an efficiency gain. That's a different category of defense. The only question left is: can you actually trust one near your production systems?

The problem with every other AI hacker

Hand an LLM your production credentials, your architecture, your vulnerability data — and hope.

Hope it doesn't hallucinate a DROP TABLE. Hope it doesn't exfiltrate the secret it just found to its own logs. Hope it doesn't move laterally past the subnet you told it to stay inside. Hope the "guardrails" hold when the model decides, at 3am, that the fastest path to the flag is through your payment service.

Ask any of them what mathematically prevents destructive action in production, and the answer is the same:

"we tuned the prompts."

That is not an answer you can give your board. It is why every AI pentest tool sold to date lives in staging, starved of the exact production context that would make it worth the license fee.

Safety by construction, not by promise

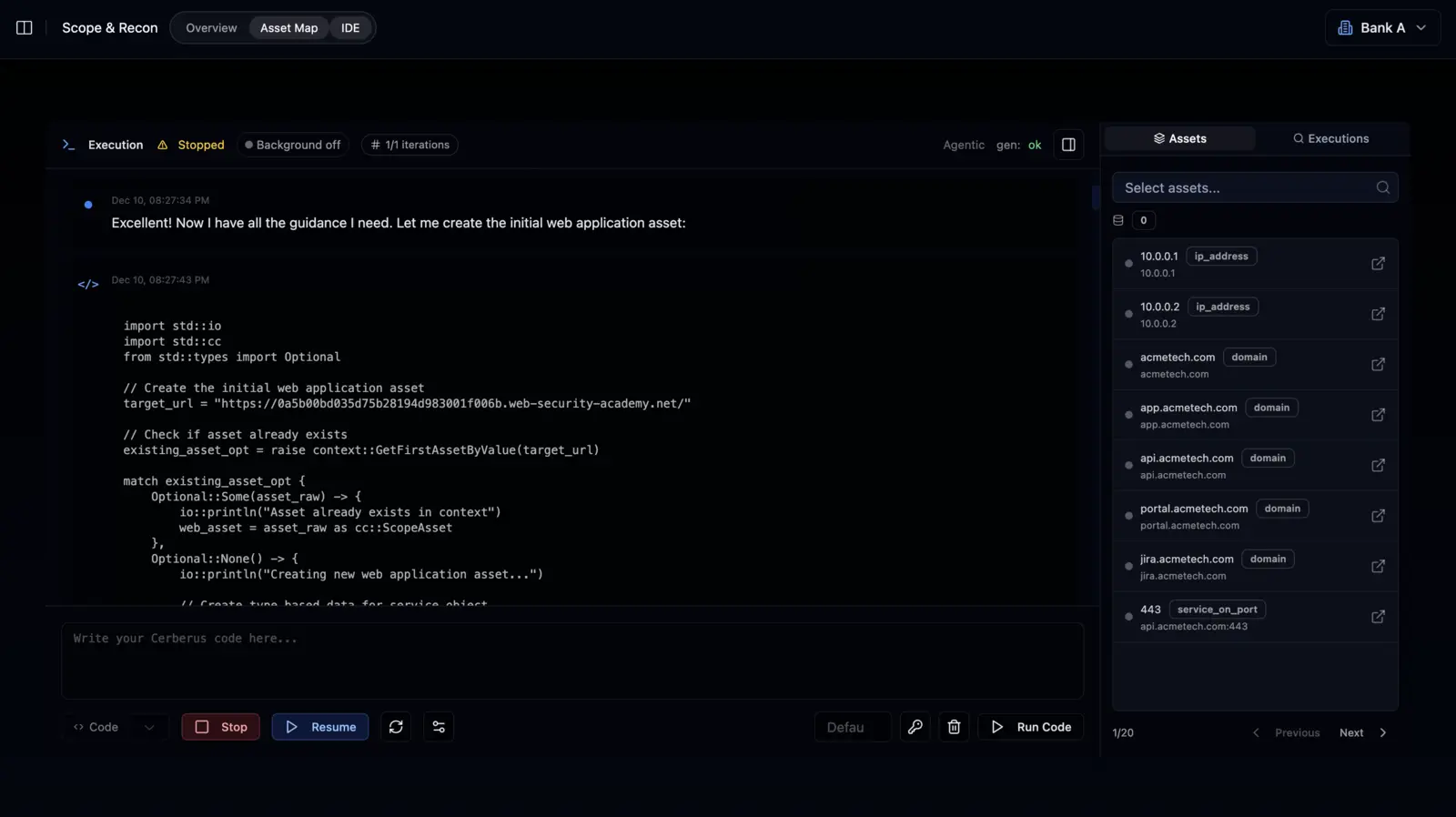

We invented something new in programming language theory: a way to represent AI hacking actions as mathematical restrictions the agent physically cannot violate. Every action our AI agent takes is written in a language we built for exactly this purpose — Cerberus Lang.

No valid proof → no execution

The compiler refuses to emit code that could violate scope. If DDoS isn't allowed, it isn't unlikely — it is provably impossible for the code to cause one.
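To make "no valid proof, no execution" concrete, here is a minimal sketch in plain Python. It is not Cerberus Lang, and it checks at runtime rather than at compile time, so it only illustrates the shape of the idea. The names `Action`, `Certificate`, `prove`, `execute`, and the two scope sets are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    kind: str    # e.g. "port_scan", "ddos" (illustrative labels)
    target: str  # hostname the action would touch


@dataclass(frozen=True)
class Certificate:
    """Evidence that one specific action was checked against the scope."""
    action: Action
    reason: str


class OutOfScope(Exception):
    """Raised when no certificate can be produced for an action."""


# Assumed scope for this sketch only.
ALLOWED_TARGETS = {"example.com"}
FORBIDDEN_KINDS = {"ddos", "sql_write"}


def prove(action: Action) -> Certificate:
    # Either return a certificate or refuse; there is no third outcome.
    if action.target not in ALLOWED_TARGETS:
        raise OutOfScope(f"{action.target} is not in scope")
    if action.kind in FORBIDDEN_KINDS:
        raise OutOfScope(f"{action.kind} is a forbidden action kind")
    return Certificate(action, "target in scope; kind permitted")


def execute(cert: Certificate) -> str:
    # The executor takes a Certificate, never a bare Action.
    return f"ran {cert.action.kind} on {cert.action.target}"


def run_plan(plan):
    results = []
    for action in plan:
        try:
            results.append(execute(prove(action)))
        except OutOfScope as err:
            results.append(f"blocked: {err}")
    return results


plan = [
    Action("port_scan", "example.com"),
    Action("ddos", "example.com"),
    Action("port_scan", "payments.internal"),
]
for line in run_plan(plan):
    print(line)
```

Python cannot stop a caller from building a `Certificate` by hand, which is exactly the gap a compiler-enforced approach is meant to close. The sketch shows the control flow, not the guarantee.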

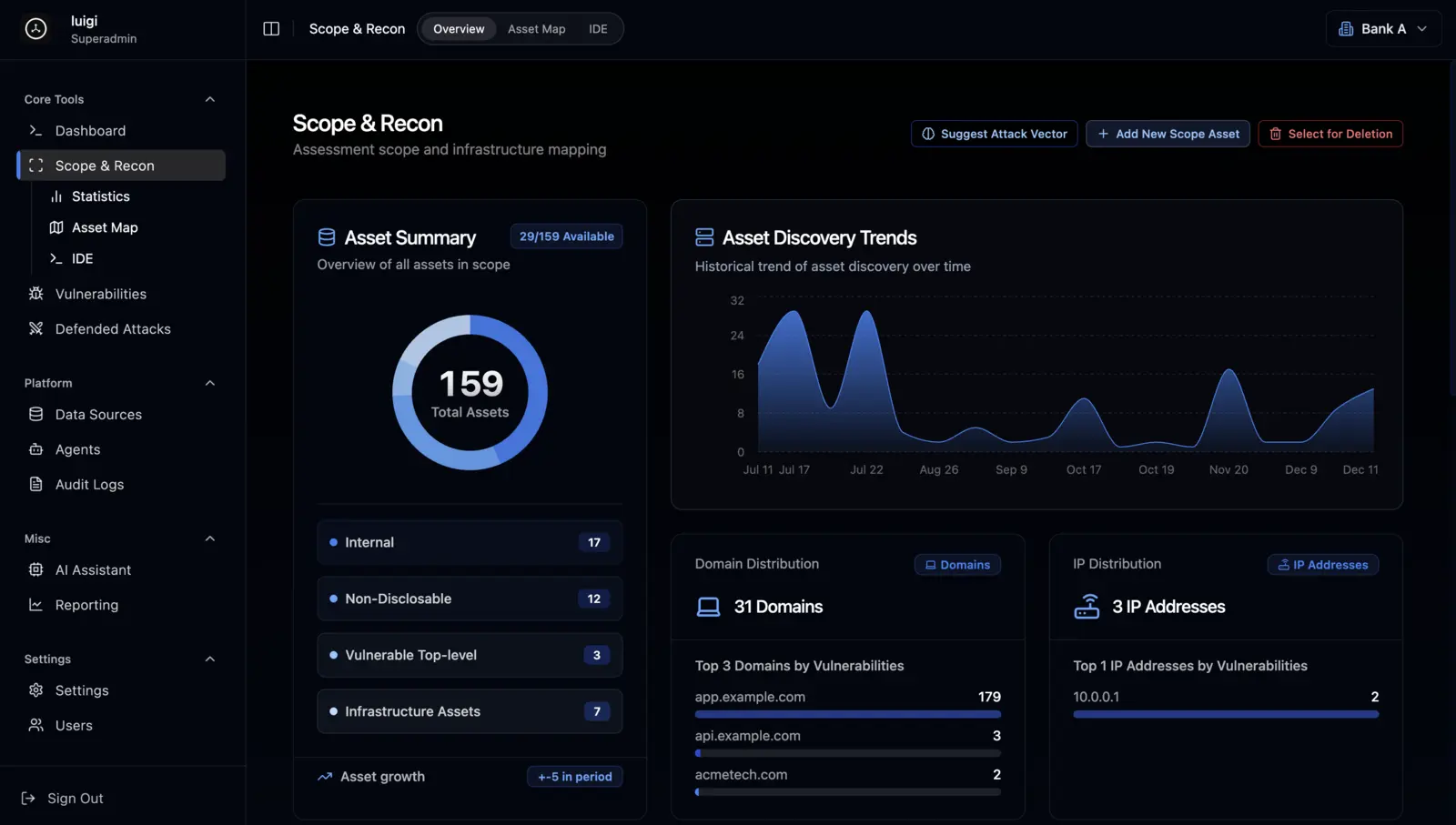

You define scope, once

In-bounds and out-of-bounds targets, destructive-write rules, lateral-movement limits. You write them down once, and they become a formal specification inside the compiler.
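As a rough picture of what a scope definition holds, the sketch below models one as a small Python data structure. The real specification format is not shown on this page, so every field name here (`in_bounds`, `out_of_bounds`, `allow_destructive_writes`, `max_lateral_hops`) is an assumption made for illustration.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network


@dataclass(frozen=True)
class Scope:
    """Hypothetical scope definition; not the product's actual schema."""
    in_bounds: tuple[str, ...]            # CIDR ranges the agent may touch
    out_of_bounds: tuple[str, ...] = ()   # carve-outs that always win
    allow_destructive_writes: bool = False
    max_lateral_hops: int = 0

    def permits_host(self, host: str) -> bool:
        addr = ip_address(host)
        # An explicit exclusion overrides any inclusion.
        if any(addr in ip_network(net) for net in self.out_of_bounds):
            return False
        return any(addr in ip_network(net) for net in self.in_bounds)


scope = Scope(
    in_bounds=("10.0.0.0/24",),
    out_of_bounds=("10.0.0.128/25",),
)

print(scope.permits_host("10.0.0.5"))     # True: inside the /24, not carved out
print(scope.permits_host("10.0.0.200"))   # False: inside the carve-out
print(scope.permits_host("192.168.1.1"))  # False: never in bounds
```

Making the structure frozen reflects the "define once" idea: the scope is fixed before the agent plans anything, and nothing downstream can loosen it.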

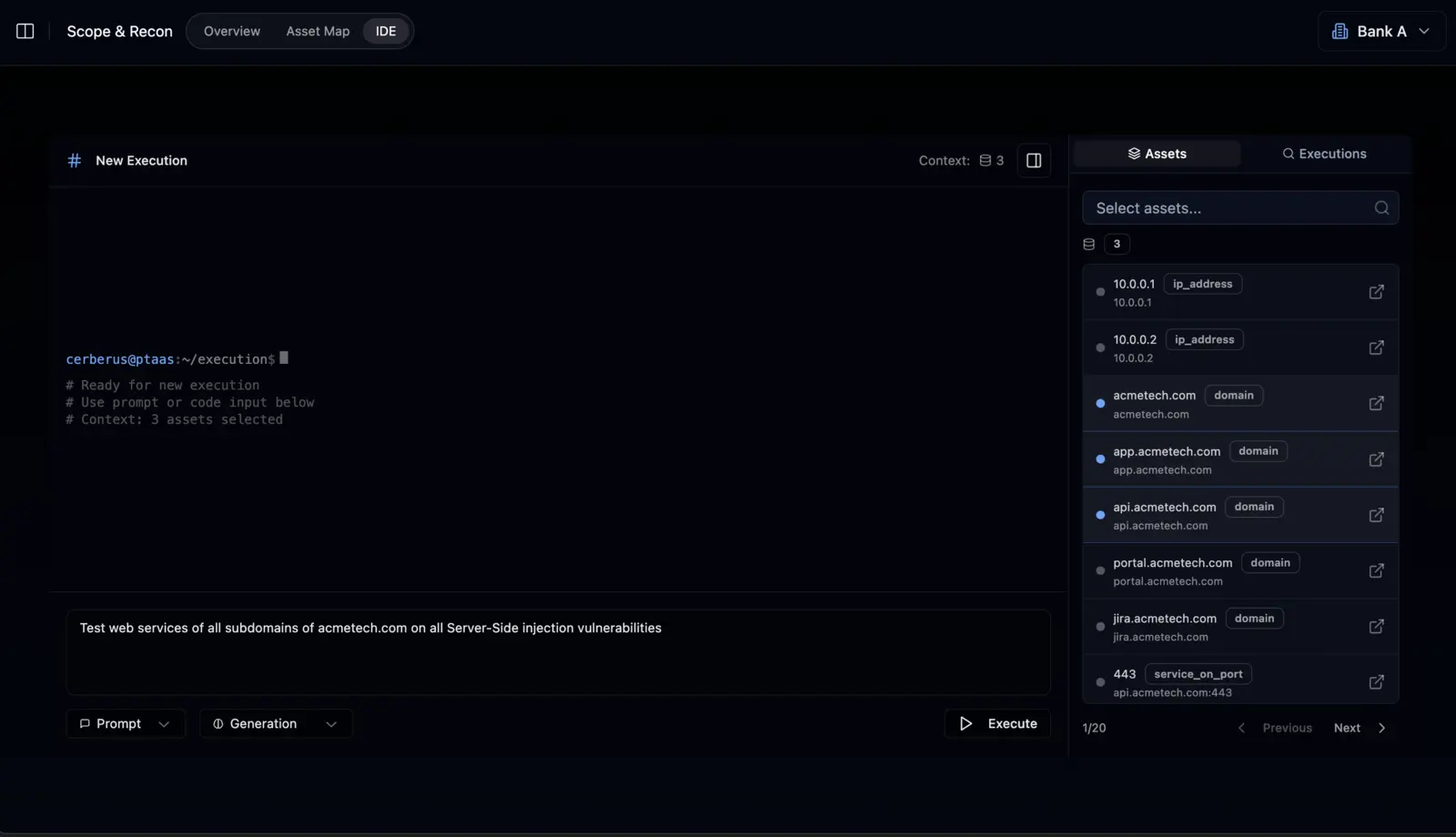

You give a natural-language objective

"Find vulnerabilities in example.com." The agent translates it into Cerberus Lang, proves each action against your scope, then runs.

Your team watches live

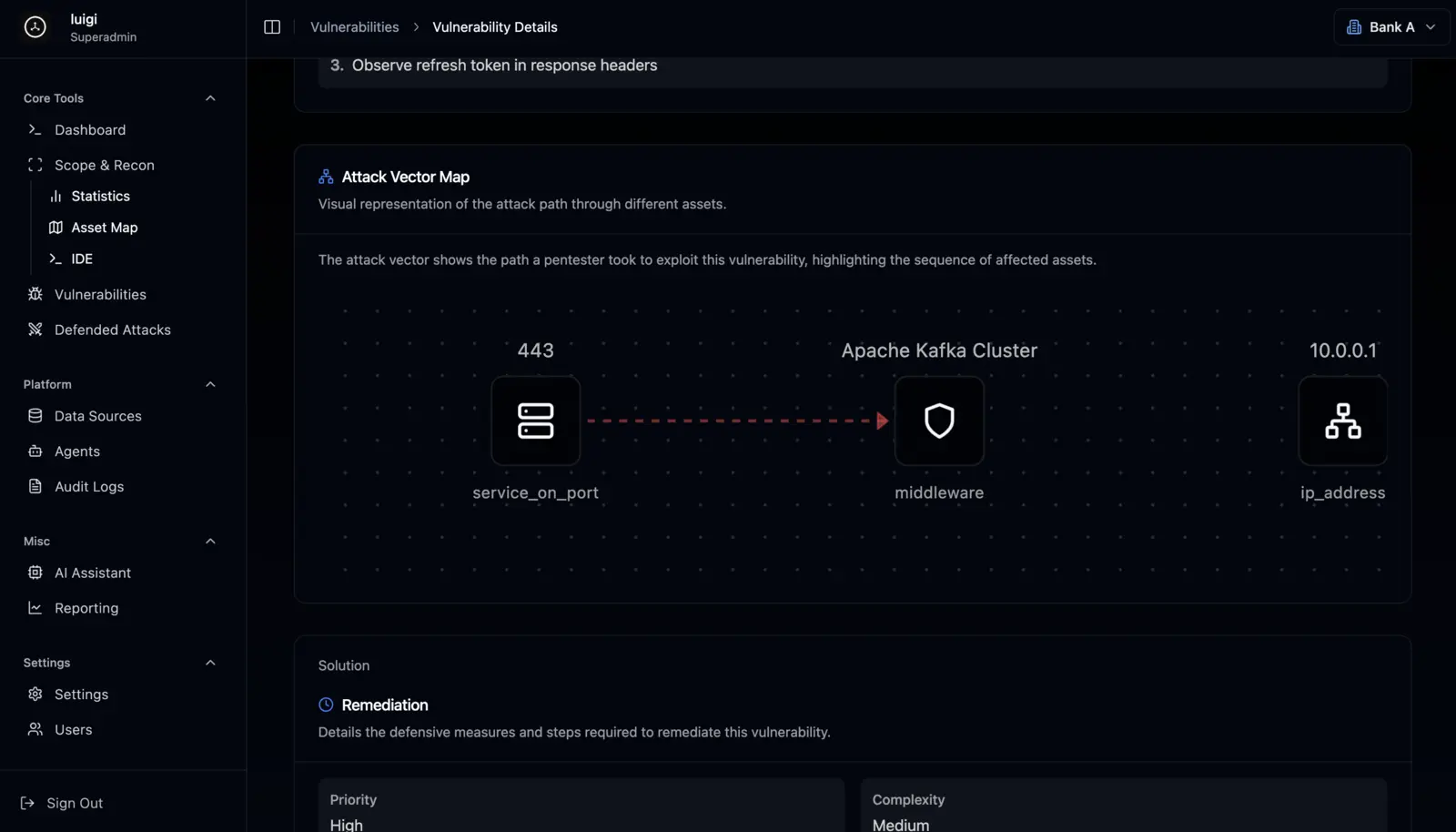

Attack tree, current hypothesis, findings as they land — in a collaborative workspace built for security teams, not a chat window.

You ship a client-ready report the same day

With a full proof trace of every action the agent took and every action the compiler blocked.
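One way to picture a trace that records both executed and blocked actions is an append-only log where each entry is hashed together with the one before it. This is a generic tamper-evidence technique (hash chaining), swapped in here for illustration; it is not a formal proof and is not claimed to be how the product stores its proof artifacts. The function names and entry fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry's predecessor


def _digest(body: dict) -> str:
    # Canonical JSON so the same body always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(trace: list, action: str, verdict: str, reason: str) -> None:
    prev_hash = trace[-1]["hash"] if trace else GENESIS
    body = {"action": action, "verdict": verdict, "reason": reason, "prev": prev_hash}
    trace.append({**body, "hash": _digest(body)})


def verify(trace: list) -> bool:
    prev_hash = GENESIS
    for entry in trace:
        body = {k: entry[k] for k in ("action", "verdict", "reason", "prev")}
        if entry["prev"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


trace = []
append_entry(trace, "port_scan example.com", "executed", "target in scope")
append_entry(trace, "ddos example.com", "blocked", "forbidden action kind")

print(verify(trace))              # True: chain is intact
trace[0]["verdict"] = "blocked"   # simulate someone editing the record
print(verify(trace))              # False: the edit breaks the chain
```

A reviewer who holds only the final hash can detect any later change to an earlier entry, which is the property an auditor would want from an attached log.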

100% control.

You are not handing the keys to a black box.

Attestable

Every action is auditor-ready. Proof artifacts for every command executed.

Interruptible

Pause, redirect, or take manual control at any point in the collaborative environment.

Enforced

Scope is enforced by the compiler, not a policy PDF. You are relying on mathematics.

Built for enterprise

On-premise & air-gapped deployment

Your data never leaves your network.

Local models supported

Run against your own LLM infrastructure. Zero cloud dependency.

Custom agents

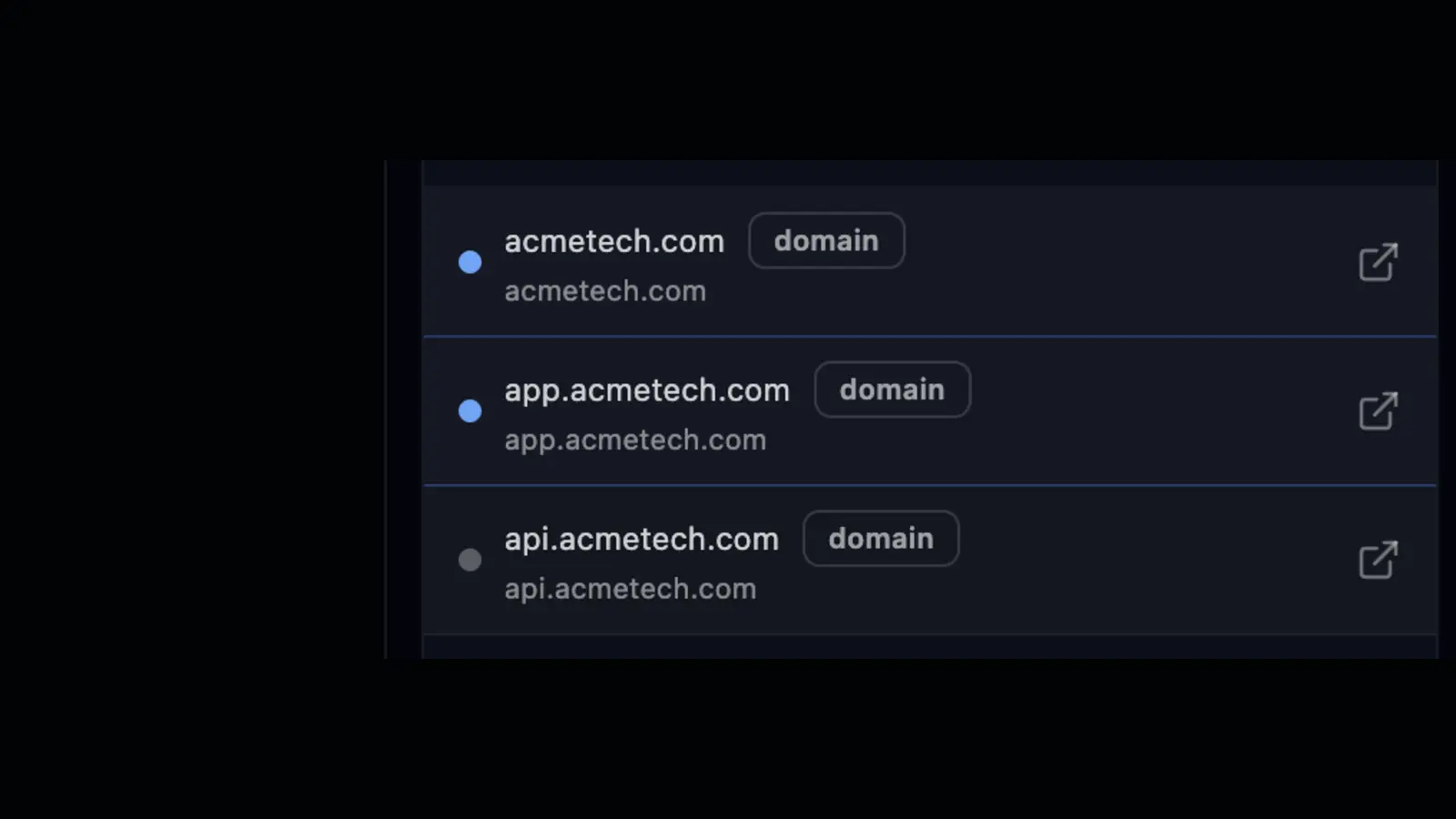

Write short Cerberus Lang scripts that replace your EASM, CVE monitor, DAST scanner, and asset discovery tools.

Collaborative workspace

Your five-person security team does the work of fifty.

VSCode extension

Developers fix vulnerabilities at write-time, not sprint-later.

CI/CD integrations

Every deploy gets pentested before it reaches production.

SAST/DAST with working PoCs

Not a list of "maybes." Real exploits you can hand to engineering.

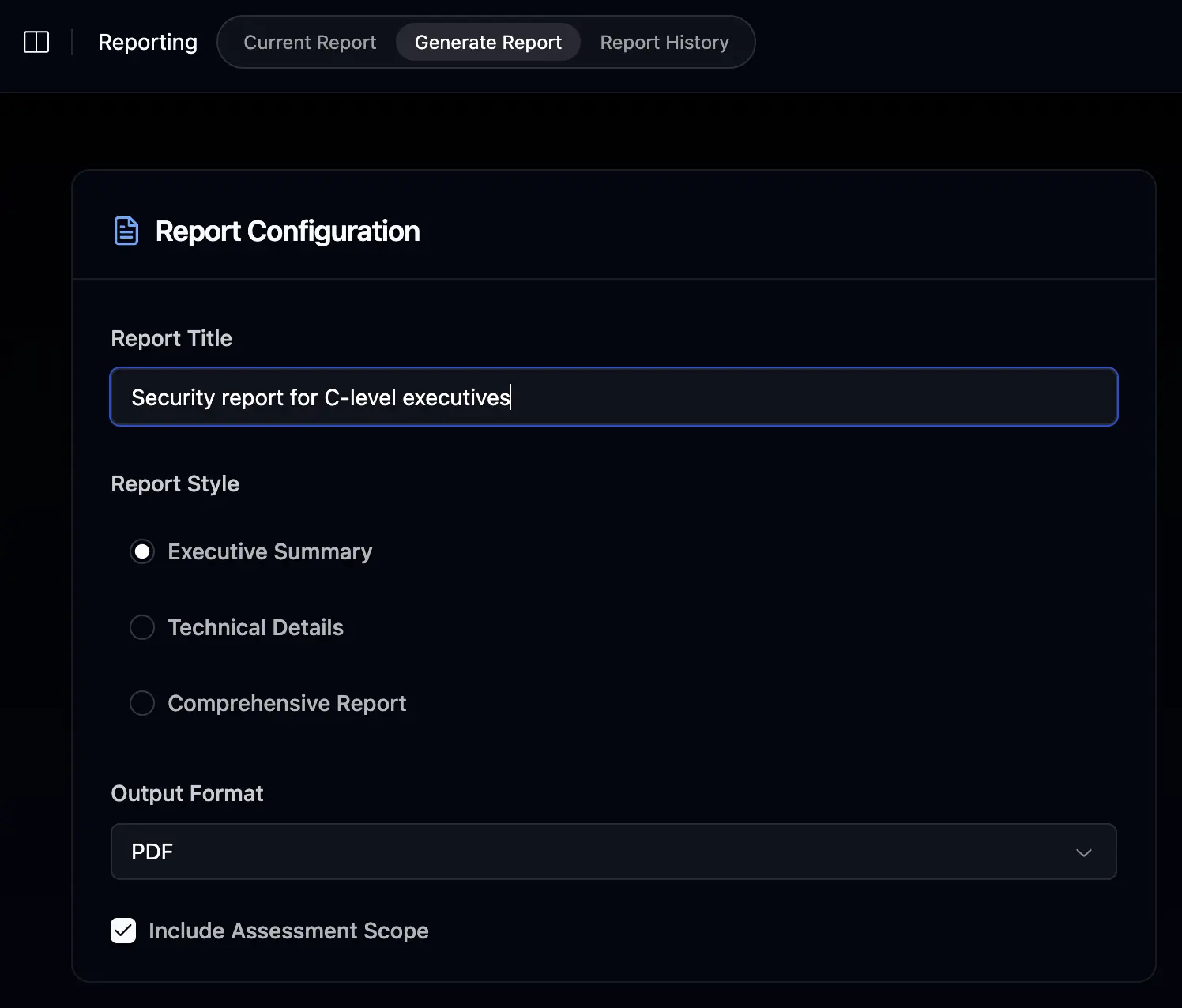

Auto-generated reports

Executive, technical, and regulator-ready versions from one run.

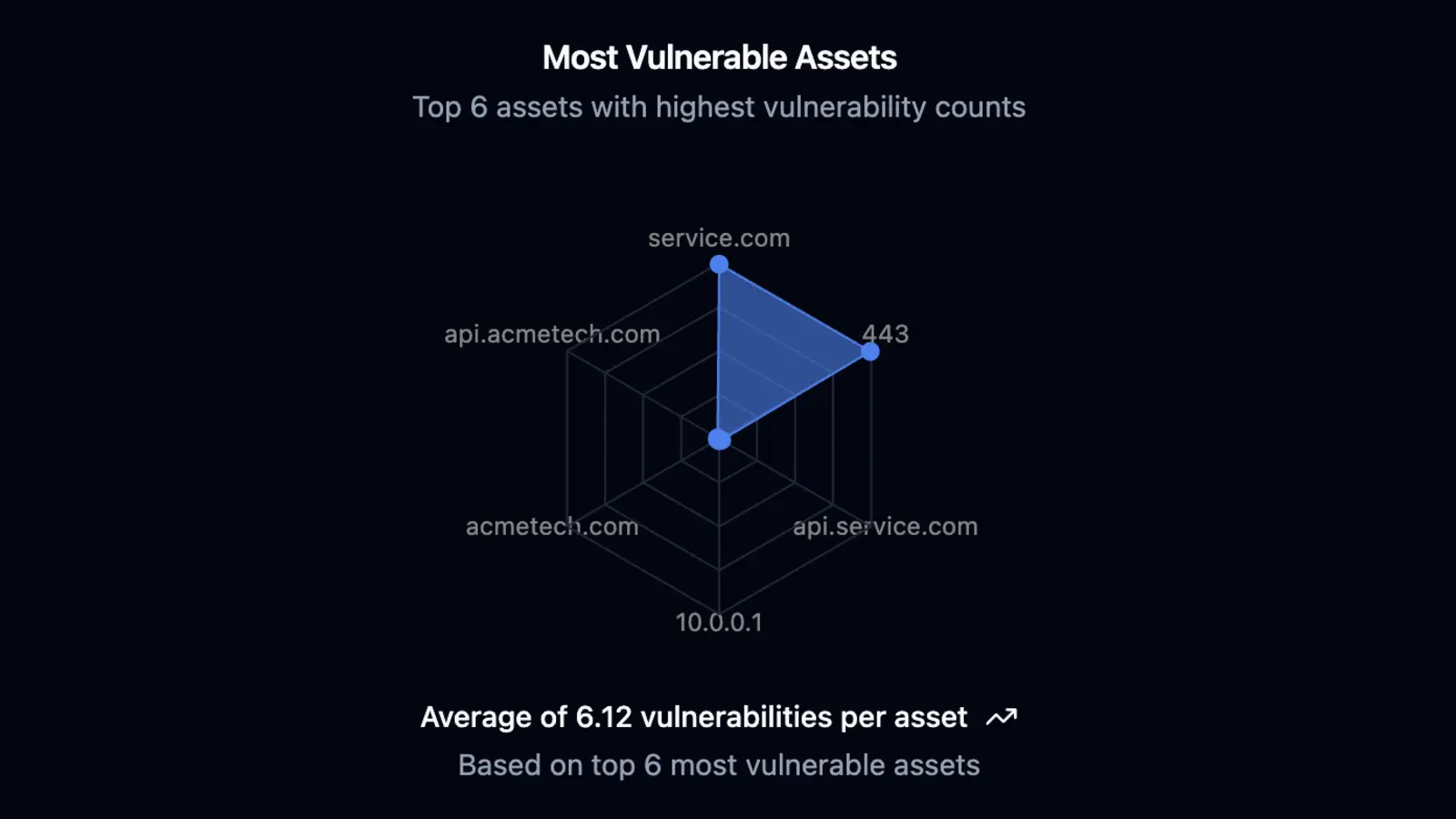

Automated Pentesting

Forget traditional vulnerability scanners that just flag potential issues. Cerberus launches actual AI-driven penetration tests that run for days, weeks, or months — writing real exploit code in our language, executing attacks, adapting to defenses, and generating proof-of-concept evidence. This isn't scanning. This is autonomous hacking at scale.

Backed by real research

This isn't a marketing claim dressed up in math vocabulary. Cerberus Lang and the proof-carrying execution model are grounded in original research in type theory and formal verification — the kind of work that has to be published, peer-reviewed, and reproduced.

No other AI pentest vendor has this background on their team. It is the reason we can ship what we ship.

What you'll see in the demo

25 minutes. A co-founder, not an SDR.

We try to break it on camera.

Live, we tell the agent to do something destructive. You watch the compiler reject it with a readable proof explaining why the action could not exist.

We run a real pentest on the same target.

Formally attested execution trace, start to finish.

The full collaborative workflow end-to-end.

Natural language in → live attack tree → findings → auto-generated client-ready report with proof log attached.

You leave with:

Pricing

Start with proof-carrying security today. Scale when you're ready.

Automated

Founders & SMBs

Cloud

- Proof-carrying execution

Professional

Pentesters & security teams

Cloud

- Collaborative workspace

- Cerberus Lang + custom agents

- Partial EASM / DAST / CVE

- Proof-carrying execution

Ready to get started?

Fill out the form to purchase Professional, request an Enterprise quote, or ask us anything. A co-founder replies within 2 hours on weekdays.

Want to see Cerberus live first? Book a 25-minute demo with a co-founder — no SDRs, no drip sequences.

Contact us

For purchases, enterprise quotes, or general inquiries.
